VibeShare is a set of experimental interactive technologies for XR live entertainment. It shares “non-verbal emotions between performer and viewer,” which were difficult to convey through conventional live streaming.

Index

- Functions

- ⏳TimeShift

- 🧭Maptop

- Demo

- Resources

- Publications

- Contact

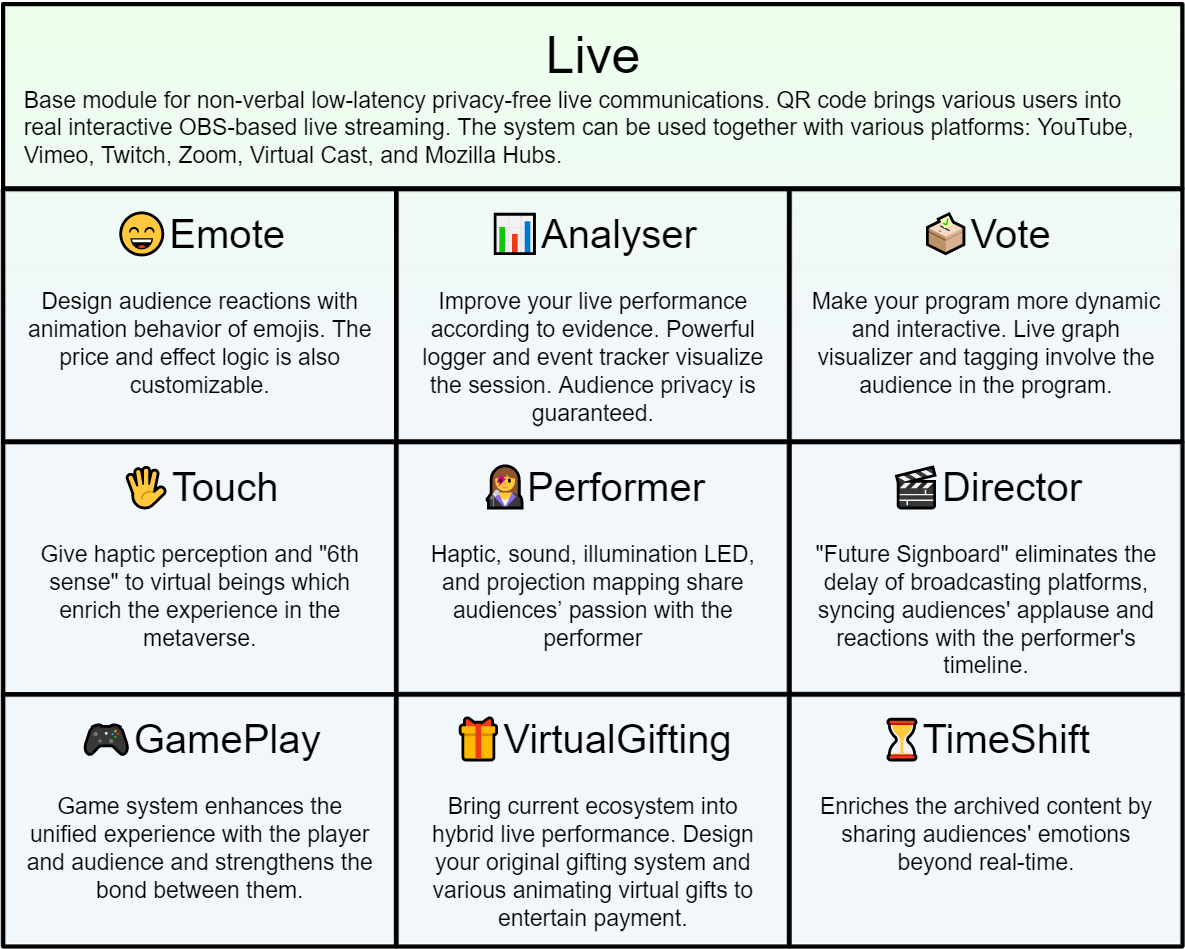

Functions Overview

VibeShare provides many functions that enhance the relationship between the performer and the audience.

Live

The base module for non-verbal, low-latency, privacy-preserving live communication. A QR code brings a wide range of users into an interactive, OBS-based live stream. The system can be used together with various platforms: YouTube, Vimeo, Twitch, Zoom, Virtual Cast, and Mozilla Hubs.

😄Emote

Design audience reactions as animated emojis. The price and effect logic are also customizable.

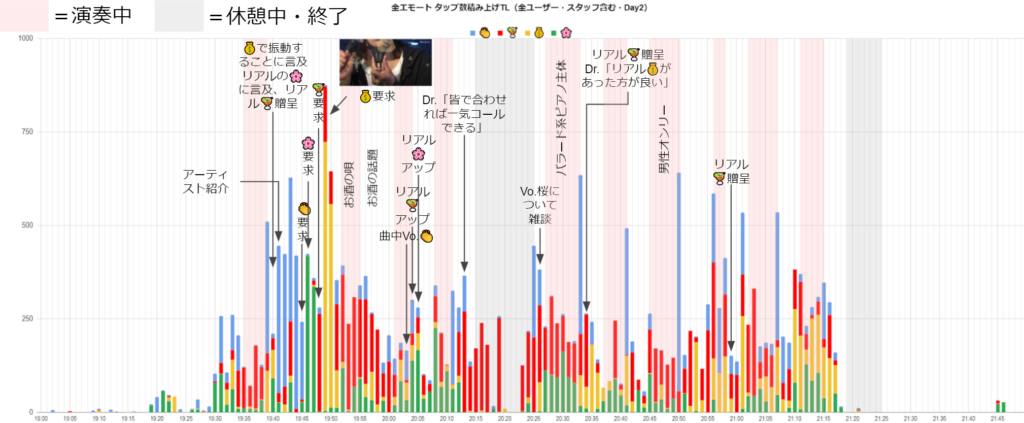

📊Analyzer

Improve your live performance based on evidence. A powerful logger and event tracker visualize the session. Audience privacy is guaranteed.

🗳️Vote

Make your program more dynamic and interactive. A live graph visualizer and tagging involve the audience in the program.
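As a rough illustration of the kind of tally a live graph could be drawn from, the sketch below keeps one vote per viewer and recounts on demand. The `LiveVote` class, its method names, and the one-vote-per-viewer rule are assumptions made for this example, not VibeShare's documented behavior.

```python
from collections import Counter


class LiveVote:
    """Hypothetical in-memory vote tally; a viewer's latest vote replaces the earlier one."""

    def __init__(self, options):
        self._options = list(options)
        self._choice_by_viewer = {}

    def cast(self, viewer_id, option):
        # Reject options that are not on the ballot.
        if option not in self._options:
            raise ValueError(f"unknown option: {option!r}")
        self._choice_by_viewer[viewer_id] = option

    def tally(self):
        # Count current choices and report every option, including those with zero votes.
        counts = Counter(self._choice_by_viewer.values())
        return {option: counts.get(option, 0) for option in self._options}


vote = LiveVote(["A", "B", "C"])
vote.cast("viewer-1", "A")
vote.cast("viewer-2", "B")
vote.cast("viewer-1", "B")  # viewer-1 changes their mind
print(vote.tally())
```

Keying the votes by viewer keeps the graph stable when viewers tap repeatedly. A real deployment might instead count every tap, depending on the program's design.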

🖐Touch

Give a haptic sense and a “6th sense” to virtual beings.

Two VTubers acquire haptic sensation in a virtual world. In the video, they enjoy the haptic feelings of shoulder tapping, hugging, and an explosion. Furthermore, they acquire a “6th sense” that lets them feel the presence of an invisible object.

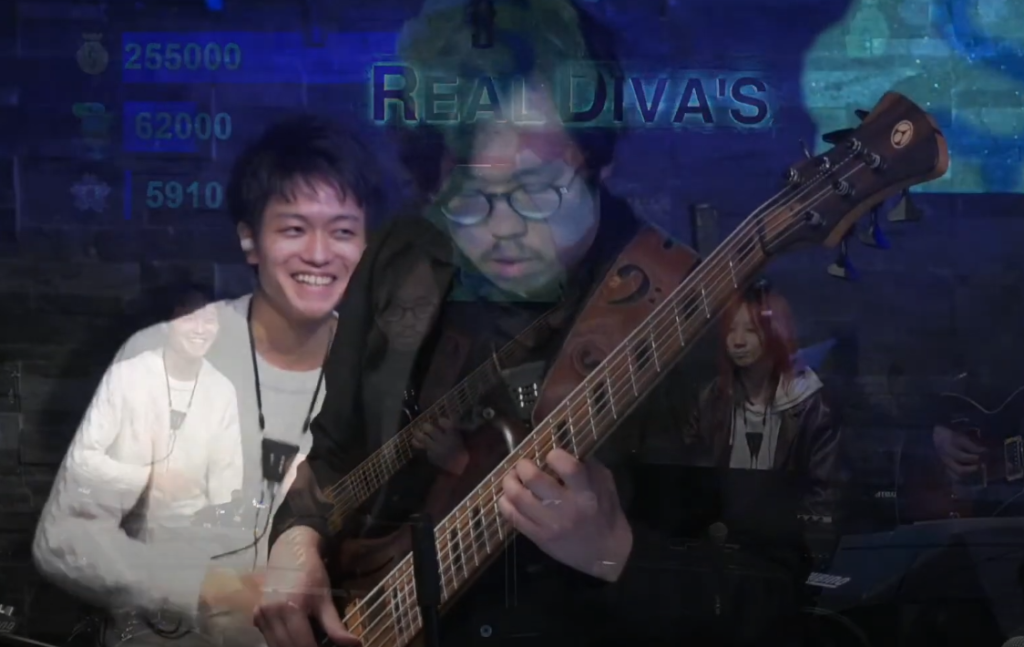

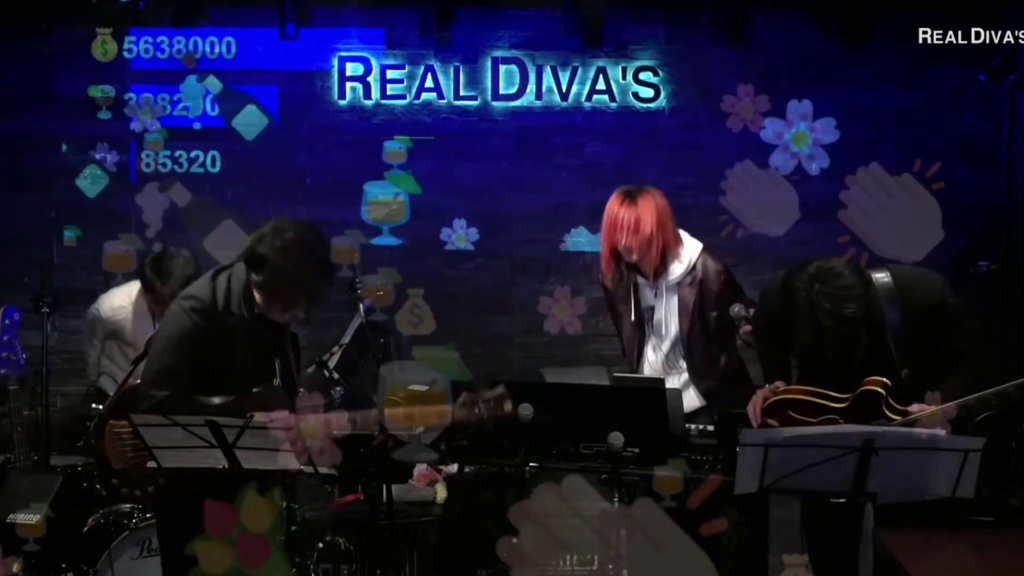

👩🎤Performer

Haptics, sound, LED illumination, and projection mapping convey the audience’s passion to the performer.

Please see the video version of this scene on YouTube.

🎬Director

“Future Signboard” eliminates the delay of broadcasting platforms, syncing audiences’ applause and reactions with the performer’s timeline.

Please see the video version of this scene on YouTube.
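This page does not describe how “Future Signboard” works internally. As one possible way to line up delayed reactions with the performer's timeline, the sketch below subtracts each viewer's estimated platform delay from the arrival time of the reaction and then groups reactions into one-second buckets. The `Reaction` type, the `stream_delay` field, and both function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Reaction:
    viewer_id: str
    kind: str            # e.g. "clap"
    received_at: float   # seconds on the performer's clock when the reaction arrived
    stream_delay: float  # assumed: this viewer's estimated platform delay in seconds


def performer_time(reaction):
    """Moment of the performance the viewer was watching when they reacted."""
    return reaction.received_at - reaction.stream_delay


def bucket_by_performer_time(reactions, bucket_seconds=1.0):
    """Count reactions per time bucket on the performer's timeline."""
    counts = {}
    for r in reactions:
        bucket = int(performer_time(r) // bucket_seconds)
        counts[bucket] = counts.get(bucket, 0) + 1
    return counts


reactions = [
    Reaction("a", "clap", received_at=12.0, stream_delay=2.0),   # low-latency platform
    Reaction("b", "clap", received_at=25.5, stream_delay=15.0),  # high-latency platform
    Reaction("c", "clap", received_at=13.0, stream_delay=1.0),
]
print(bucket_by_performer_time(reactions))
```

In this example the first two viewers reacted to the same moment of the show even though their reactions arrived more than 13 seconds apart.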

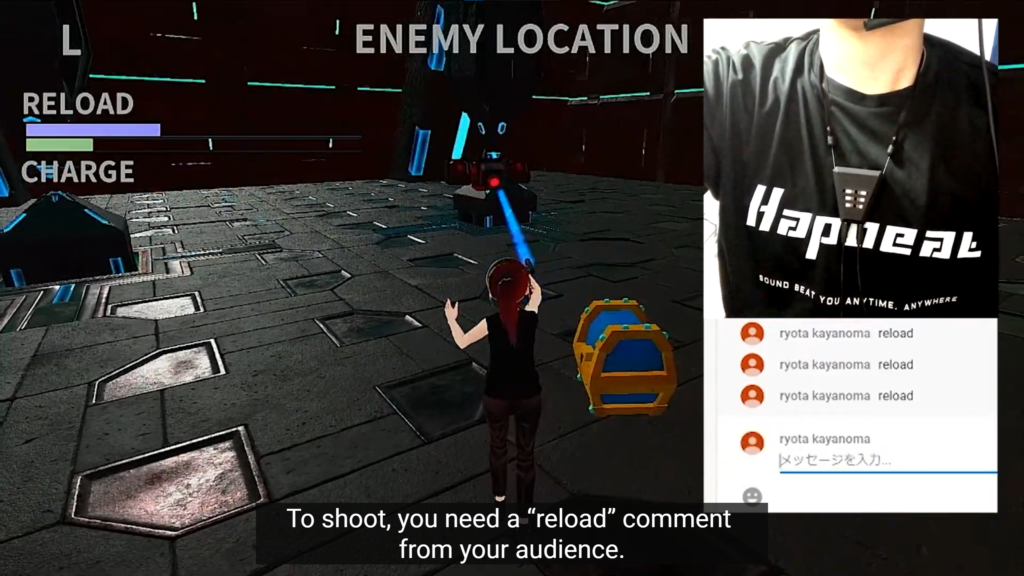

🎮GamePlay

A game system creates a shared experience between the player and the audience and strengthens the bond between them.

To clear the game, the player needs to ask viewers for comments, which deepens the bond between them.

🎁VirtualGifting

Bring the existing gifting ecosystem into hybrid live performances. Design your own gifting system and a variety of animated virtual gifts that make paying entertaining.

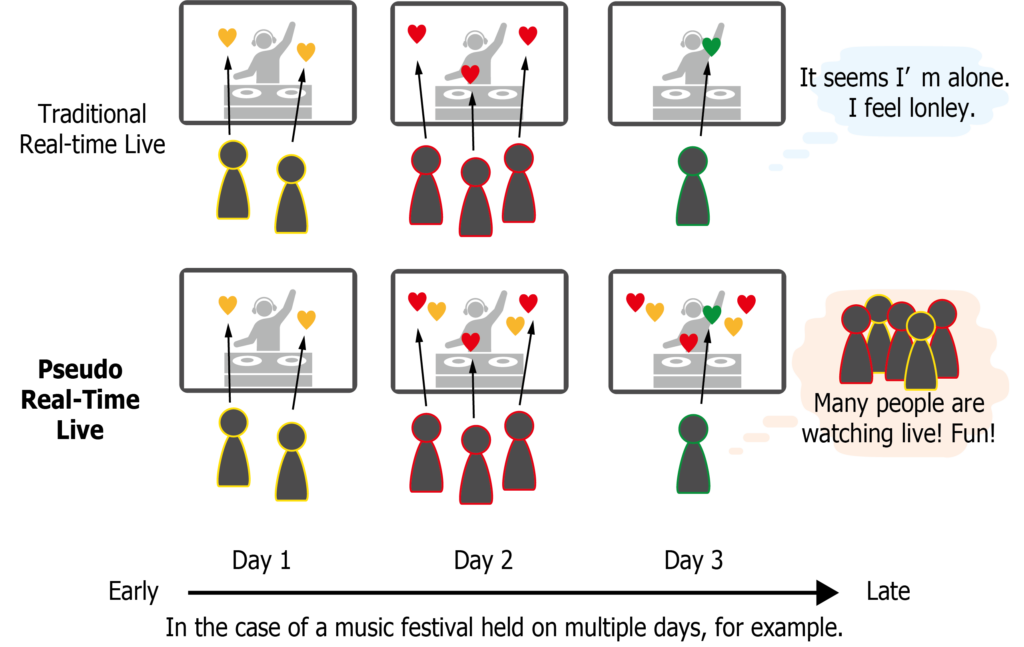

⏳TimeShift

Enrich archived content by sharing the audience’s emotions beyond real time.
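One way to picture this is to store each reaction with its offset from the start of the show and replay it when an archive viewer reaches the same position. The sketch below is a minimal illustration under that assumption. `ReactionArchive` and its `between` method are hypothetical names, not part of VibeShare.

```python
import bisect


class ReactionArchive:
    """Hypothetical store of (offset_seconds, reaction) pairs recorded during the live show."""

    def __init__(self, events):
        # Sort once so that playback queries can use binary search.
        self._events = sorted(events)
        self._offsets = [offset for offset, _ in self._events]

    def between(self, start, end):
        """Return reactions whose offset satisfies start <= offset < end."""
        lo = bisect.bisect_left(self._offsets, start)
        hi = bisect.bisect_left(self._offsets, end)
        return [reaction for _, reaction in self._events[lo:hi]]


archive = ReactionArchive([(5.0, "clap"), (1.2, "smile"), (5.4, "cheer"), (9.0, "smile")])
# While the archive player advances from 5.0 s to 6.0 s, show these reactions:
print(archive.between(5.0, 6.0))
```

A player could call `between` once per rendered time slice, passing the previous and current playback positions.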

🧭Maptop

A map-based XR metaverse game system, also designed for educational workshops.

Demo

We created and demonstrated several specific applications in events, conferences, lectures, and facilities.

Details are available here: Page link to Demo (written in Japanese)

Resources

Related Publications

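- Yusuke Yamazaki (REALITY, Inc. / Tokyo Institute of Technology Graduate School), Akihiko Shirai, “VibeShare::Performer: Making Online Music Live Shows Interactive with Emoji, Haptics, and Sound Effects,” 26th Annual Conference of the Virtual Reality Society of Japan (2021/9/21). [Web] [PDF]

- Yusuke Yamazaki, Akihiko Shirai, “VibeShare: Vote: Realizing Non-Verbal Communication between Performers and Audiences Online,” Forum on Visual Expression and Art Science 2021 (Expressive Japan 2021), [Abstract], [Slides], [SlideShare] (2021/3/8)

- Akihiko Shirai, “Development of an International Interactive Avatar Haptic Live Show Using Virtual Cast and Hapbeat,” GREE Tech Book Fest Club Magazine (グリー技術書典部誌), Spring 2020 issue

- Yusuke Yamazaki, Akihiko Shirai, “Proposal of a Navigation System Using Tactile Perception on the Neck,” Proceedings of the 24th Annual Conference of the Virtual Reality Society of Japan, 2019-9-11 [PDF]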

- Yusuke Yamazaki, Shoichi Hasegawa, Hironori Mitake, and Akihiko Shirai. 2019. Neck strap haptics: an algorithm for non-visible VR information using haptic perception on the neck. In ACM SIGGRAPH 2019 Posters (SIGGRAPH ’19). Association for Computing Machinery, New York, NY, USA, Article 60, 1–2. DOI: https://doi.org/10.1145/3306214.3338562

Contact

Please feel free to contact us via email at jp-gi-vr@gree.net